Spatial audio is no longer a niche feature. With formats like Dolby Atmos, 5.1 surround, and binaural mixes becoming standard across streaming, gaming, and XR, audiences now expect immersive soundscapes in every language. For localization teams, this raises a new challenge: how to match complex 3D audio environments across languages without breaking immersion.

Why Spatial Audio Complicates Localization

In stereo dubbing, dialogue sits in a relatively fixed position, typically centered in the mix. In spatial formats, dialogue becomes part of a dynamic soundfield. Voices may move with characters, exist above or behind the listener, or interact with environmental acoustics.

When localizing for spatial audio, teams must preserve:

- Object placement (where the voice exists in 3D space)

- Movement and timing (how the sound travels)

- Environmental interaction (reverb, reflections, distance)

A line that is technically correct in translation can still feel wrong if it’s placed incorrectly in the spatial mix.

Matching Dialogue Placement in 3D Space

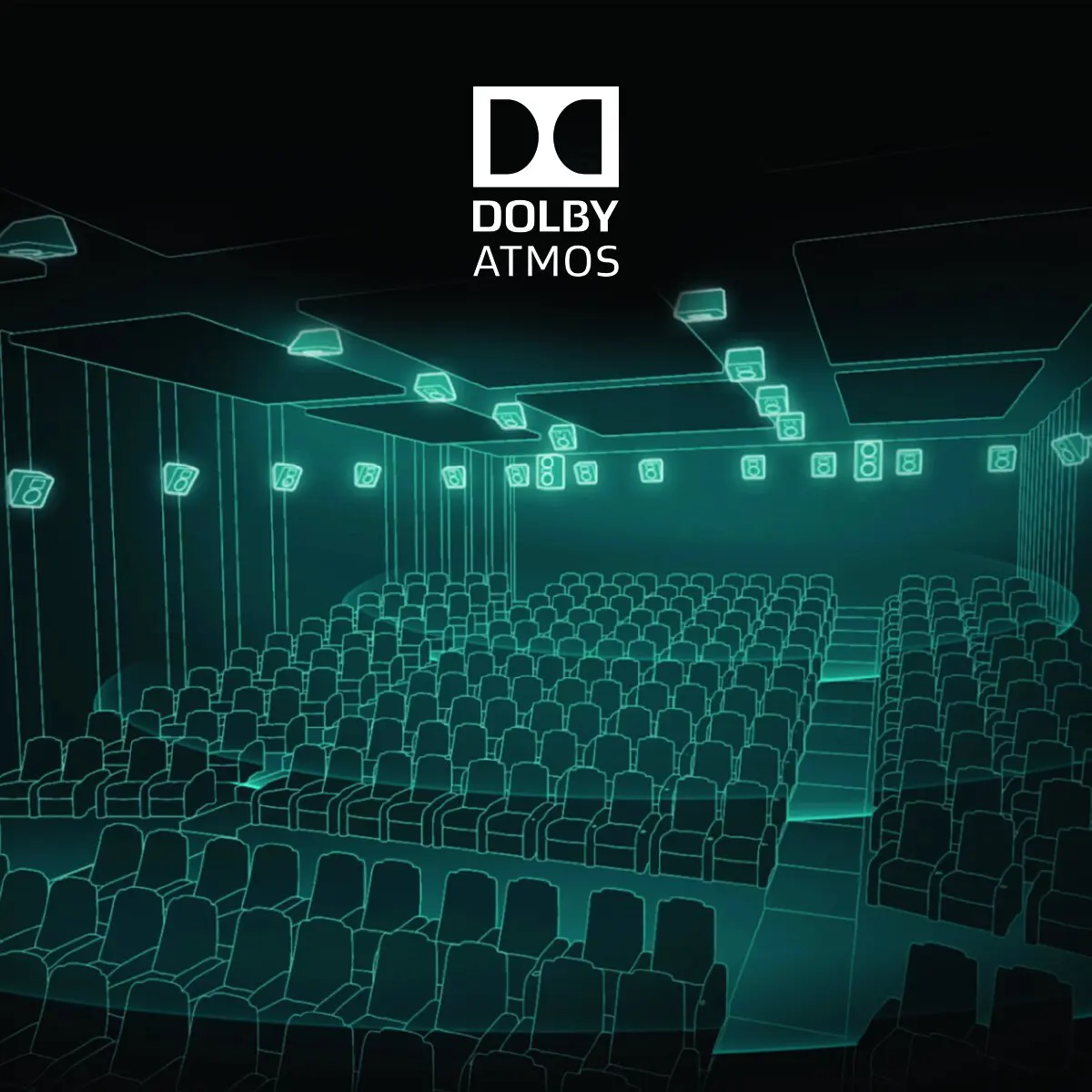

In formats like Dolby Atmos, dialogue is often treated as an object rather than a fixed channel. This allows precise positioning—but also introduces risk during localization.

If localized dialogue is not mapped to the same spatial coordinates as the original, issues can arise:

- Voices drifting away from on-screen characters

- Dialogue feeling disconnected from action

- Inconsistent spatial positioning across languages

Maintaining accurate object metadata is critical. Localization teams must ensure that every localized voice line carries the same position and movement data as the original, from recording through to the final mix.
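One way to catch drifting metadata early is to compare the localized object's position keyframes against the original's. The sketch below is illustrative only: the `ObjectKeyframe` schema, the normalized coordinate ranges, and the `tolerance` value are assumptions for this example, not the metadata layout of Dolby Atmos or any particular tool.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ObjectKeyframe:
    """One automation point for a dialogue object (hypothetical schema)."""
    time_s: float  # time from the start of the line, in seconds
    x: float       # left (-1.0) to right (+1.0)
    y: float       # back (-1.0) to front (+1.0)
    z: float       # floor (0.0) to ceiling (+1.0)


def position_drift(original, localized):
    """Return the largest positional distance between paired keyframes."""
    if len(original) != len(localized):
        raise ValueError("keyframe counts differ; spatial data was lost or resampled")
    return max(
        (math.dist((o.x, o.y, o.z), (l.x, l.y, l.z))
         for o, l in zip(original, localized)),
        default=0.0,
    )


def check_line(original, localized, tolerance=0.05):
    """True when the localized line stays within `tolerance` of the original path."""
    return position_drift(original, localized) <= tolerance


# Hypothetical example: the dubbed line ends 0.3 units too far to the right.
source = [ObjectKeyframe(0.0, -0.5, 0.8, 0.0), ObjectKeyframe(1.2, 0.0, 0.8, 0.0)]
dubbed = [ObjectKeyframe(0.0, -0.5, 0.8, 0.0), ObjectKeyframe(1.2, 0.3, 0.8, 0.0)]
print(check_line(source, source))  # True
print(check_line(source, dubbed))  # False
```

A check like this only compares positions at matching keyframes. If the localized line has been retimed, the keyframe times would need to be aligned first.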

Reverb and Environmental Consistency

Reverb is one of the most overlooked aspects of spatial localization. In immersive audio, reverb is not just an effect—it defines the perceived space.

A voice recorded in a neutral booth must be processed to match:

- Room size and acoustics

- Distance from the listener

- Environmental context (indoor vs outdoor, open vs enclosed)

If reverb doesn’t match the original mix, localized dialogue can feel “pasted on” rather than integrated. This breaks immersion even if the performance itself is strong.
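As a rough starting point for matching a booth recording to a scene, two textbook relationships can be sketched in code: Sabine's estimate of reverberation time from room volume and absorption, and the inverse-distance law for the level of the direct sound. The room dimensions, absorption figure, and listener distance below are made-up values for illustration. In practice, the original mix and the mixer's ear remain the reference.

```python
import math


def sabine_rt60(volume_m3, absorption_m2):
    """Sabine estimate of reverberation time in seconds (metric units)."""
    if volume_m3 <= 0 or absorption_m2 <= 0:
        raise ValueError("volume and absorption must be positive")
    return 0.161 * volume_m3 / absorption_m2


def direct_level_db(distance_m, reference_m=1.0):
    """Direct-sound level relative to the reference distance (inverse-distance law)."""
    if distance_m <= 0 or reference_m <= 0:
        raise ValueError("distances must be positive")
    return -20.0 * math.log10(distance_m / reference_m)


# Hypothetical scene: a 6 m by 5 m by 3 m room with 20 m^2 of equivalent
# absorption, and a character standing 4 m from the listener.
rt60 = sabine_rt60(6 * 5 * 3, 20.0)
level = direct_level_db(4.0)
print(f"RT60 ~ {rt60:.2f} s, direct sound ~ {level:.1f} dB re 1 m")
# prints: RT60 ~ 0.72 s, direct sound ~ -12.0 dB re 1 m
```

The numbers give a sanity check on reverb length and dry level before fine-tuning by ear. They do not capture early reflections or outdoor spaces, where Sabine's formula does not apply.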

Format-Specific Challenges: Atmos, 5.1, and Binaural

Each spatial format introduces its own constraints.

Dolby Atmos allows object-based placement, offering flexibility but requiring precise metadata management.

5.1 surround relies on channel-based mixing: dialogue is placed by balancing levels across fixed speaker channels, so the localized line must be panned and leveled to match the original.

Binaural audio (for headphones) depends heavily on psychoacoustic cues such as interaural time and level differences, so small errors in timing or spatialization are more noticeable.

Localization workflows must adapt to each format rather than applying a single approach.
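To give a sense of how small the timing cues in binaural playback are, the sketch below uses Woodworth's spherical-head approximation of the interaural time difference (ITD). The head radius and speed of sound are assumed typical values, and the model ignores everything a real HRTF captures beyond arrival time.

```python
import math

HEAD_RADIUS_M = 0.0875   # assumed average head radius
SPEED_OF_SOUND = 343.0   # m/s in air at roughly 20 degrees Celsius


def woodworth_itd(azimuth_deg):
    """ITD in seconds for a distant source, spherical-head model.

    Valid for azimuths from -90 (hard left) to +90 (hard right) degrees.
    """
    if not -90.0 <= azimuth_deg <= 90.0:
        raise ValueError("azimuth must be within [-90, 90] degrees")
    theta = math.radians(azimuth_deg)
    return HEAD_RADIUS_M / SPEED_OF_SOUND * (theta + math.sin(theta))


for az in (0, 10, 90):
    print(f"{az:>3} deg -> {woodworth_itd(az) * 1e6:6.1f} us")
```

Under these assumptions, a voice placed 10 degrees off-center already produces an ITD of about 89 microseconds, and the maximum at 90 degrees is only about 656 microseconds. A placement error of a few degrees therefore shifts the cue by tens of microseconds, which is a plausible reason headphone listeners notice mismatches that a speaker mix would mask.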

The Role of QA in Spatial Localization

Quality control becomes significantly more complex in spatial audio. Traditional QA checks—sync, clarity, noise—are no longer enough.

Teams must evaluate:

- Spatial accuracy of dialogue

- Consistency across playback systems

- Listener comfort and immersion

- Downmix behavior (how spatial audio translates to stereo)

This often requires multiple monitoring setups, including speaker arrays and binaural headphone checks.
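The downmix check in particular lends itself to automation. The sketch below folds 5.1 sample frames to stereo with the commonly used ITU-R BS.775 coefficients (center and surrounds at -3 dB, LFE dropped) and flags a fold-down that would exceed full scale. The channel order and the sample values are assumptions for illustration, and a real deliverable may specify different downmix coefficients.

```python
import math

MINUS_3_DB = 1 / math.sqrt(2)  # about 0.7071


def downmix_51_to_stereo(frame):
    """Fold one 5.1 frame, assumed order (L, R, C, LFE, Ls, Rs), to (Lo, Ro)."""
    l, r, c, _lfe, ls, rs = frame  # the LFE channel is discarded
    lo = l + MINUS_3_DB * c + MINUS_3_DB * ls
    ro = r + MINUS_3_DB * c + MINUS_3_DB * rs
    return lo, ro


def peak(stereo_frames):
    """Largest absolute sample value across both stereo channels."""
    return max((max(abs(lo), abs(ro)) for lo, ro in stereo_frames), default=0.0)


# Hypothetical frames: center dialogue plus full-scale content at front left
# and left surround, followed by a frame of dialogue alone.
frames = [
    (1.0, 0.0, 0.8, 0.0, 1.0, 0.0),
    (0.0, 0.0, 0.5, 0.0, 0.0, 0.0),
]
stereo = [downmix_51_to_stereo(f) for f in frames]
print(peak(stereo) > 1.0)  # True: this fold-down would clip without attenuation
```

Running the same fold-down on the original and the localized mix, then comparing dialogue level in the result, is one way to confirm that the dub survives stereo playback as well as the source does.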

Why It Matters More Than Ever

As streaming platforms and AAA games push immersive audio as a standard feature, inconsistent localization becomes more noticeable. Audiences expect the same level of immersion in every language.

Spatial audio localization is not just about translation—it’s about recreating the entire sonic experience.

Studios that master this process can deliver truly global content that feels native in every market. Those that fall short risk breaking immersion at the exact moment they’re trying to enhance it.