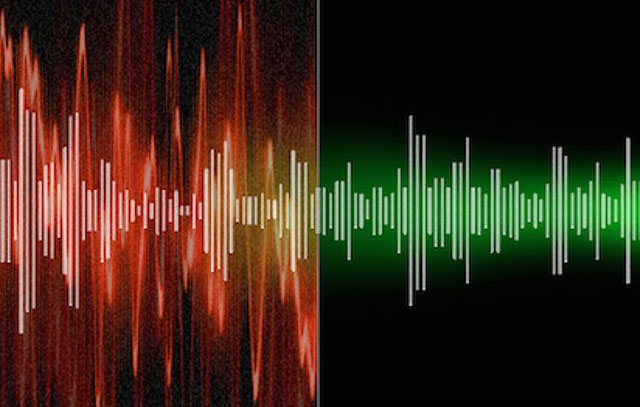

AI-powered audio cleanup tools have become a staple in modern post-production. With a few clicks, background noise disappears, room tone is smoothed out, and dialogue suddenly sounds “studio clean.” For fast-paced content pipelines and remote recordings, these tools feel like a miracle. But as their use spreads across film, advertising, games, and localization, an industry-wide problem is becoming harder to ignore: over-de-noising and the voice artifacts it leaves behind.

When Clean Audio Stops Sounding Human

Over-de-noising occurs when a cleanup model removes noise aggressively without distinguishing it from sounds that belong to the voice itself. Breaths, subtle sibilance, mouth sounds, and natural room reflections are often misclassified as “unwanted sound.” Once removed, they cannot be recovered from the processed file.

The result is familiar to many audio professionals:

- Voices sound thin or hollow

- Consonants smear or “chirp”

- Sibilance becomes brittle

- Speech develops a robotic, underwater quality

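Ironically, these issues often become more noticeable after compression and loudness normalization, which are exactly the steps most content goes through before release. Compression narrows the dynamic range, so when the overall level is brought back up, quiet residue (artifacts included) sits closer to the level of the speech. What sounded “clean” in isolation suddenly feels artificial in the final mix.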
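
The way compression exposes a faint artifact can be illustrated with some simple level arithmetic. The sketch below assumes a static compressor curve (threshold of -20 dBFS, ratio of 4:1) and invented levels for the speech peak and the artifact. Real compressors add attack, release, and knee behavior, so the numbers are illustrative only, and the function names are hypothetical.

```python
def compress_db(level_db, threshold_db=-20.0, ratio=4.0):
    """Static downward compressor curve (assumed model, no attack/release).

    Levels at or below the threshold pass unchanged; the portion above
    the threshold is divided by the ratio.
    """
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio


# Assumed example levels in dBFS (not measurements from any real session).
speech_peak_db = -6.0
artifact_db = -40.0

# Distance between speech and artifact before any dynamics processing.
gap_before = speech_peak_db - artifact_db

# The speech is above the threshold and gets turned down;
# the artifact is below the threshold and is left untouched.
speech_out_db = compress_db(speech_peak_db)
artifact_out_db = compress_db(artifact_db)
gap_after = speech_out_db - artifact_out_db

# Gain needed to bring the speech peak back to its original level,
# standing in for makeup gain or loudness normalization.
makeup_db = speech_peak_db - speech_out_db
artifact_final_db = artifact_out_db + makeup_db

print(f"gap before compression: {gap_before:.1f} dB")
print(f"gap after compression:  {gap_after:.1f} dB")
print(f"artifact level after makeup gain: {artifact_final_db:.1f} dBFS")
```

With these assumed values, the gap between speech and artifact shrinks from 34 dB to 23.5 dB, and restoring the speech peak lifts the artifact from -40 dBFS to -29.5 dBFS. The artifact itself has not changed; it is simply much closer to the dialogue than it was when the clip was auditioned on its own.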

Why AI Cleanup Tools Are Prone to Overuse

The problem isn’t the technology itself—it’s how easily it can be misused. Many AI tools are designed to impress in seconds, offering dramatic before-and-after previews that encourage aggressive settings. Non-specialists may assume that “more noise removal” equals better quality, especially under tight deadlines.

In localization and voiceover work, this risk increases. Recordings often come from home studios or remote setups, where background noise varies. Instead of addressing the source—mic choice, room treatment, or performance technique—AI cleanup becomes a shortcut. Over time, that shortcut erodes vocal naturalness.

Why Artifacts Are Especially Dangerous in Localization

Voice artifacts are not just a technical issue—they’re a storytelling problem. In dubbed content, unnatural audio immediately breaks immersion. Viewers may not know why a voice sounds wrong, but they feel it.

Artifacts can also affect intelligibility. In languages with soft consonants or tonal nuances, over-processed audio can change meaning or reduce clarity. In games, repeated exposure to artifact-heavy dialogue becomes fatiguing, especially in long play sessions.

Worse, inconsistencies across languages can emerge. One market might receive clean, natural audio, while another gets over-processed dialogue, creating uneven quality in global releases.

Best Practices to Avoid Over-De-Noising

AI cleanup tools are not the enemy—they simply need restraint and context. Here are best practices used by experienced audio teams:

- Start with the best possible recording. No algorithm can fully fix poor mic placement or reflective rooms. Invest in capture quality first.

- Use noise reduction in layers, not one pass. Gentle reduction applied gradually preserves vocal texture better than aggressive single-pass cleanup.

- Always A/B test in context. Listen to processed dialogue within the full mix, not soloed. Artifacts often reveal themselves only in context.

- Avoid “auto” settings as final output. Presets are a starting point, not a finish line.

- Preserve breaths and micro-detail. These elements signal human presence. Removing them entirely often makes dialogue feel synthetic.

- Combine AI with human judgment. Experienced ears can identify when clarity turns into damage; AI cannot.
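
The advice to work in layers can be sketched as a small planning helper. This is a minimal illustration, not a feature of any particular cleanup tool: `plan_passes`, `combined_gain`, and the 4 dB per-pass cap are all assumptions chosen for the example. It only splits a total reduction target into equal, gentle passes, so that each pass can be auditioned in the mix before the next one is applied.

```python
import math


def plan_passes(total_reduction_db, max_per_pass_db=4.0):
    """Split a total noise-reduction target into equal gentle passes.

    Hypothetical helper: each pass removes at most max_per_pass_db,
    and the passes sum to total_reduction_db.
    """
    if total_reduction_db < 0 or max_per_pass_db <= 0:
        raise ValueError("reduction must be >= 0 and per-pass cap must be > 0")
    count = math.ceil(total_reduction_db / max_per_pass_db)
    if count == 0:
        return []
    return [total_reduction_db / count] * count


def combined_gain(passes_db):
    """Linear gain applied to the noise floor after all passes.

    Reductions expressed in dB add up; the equivalent linear gains multiply.
    """
    gain = 1.0
    for reduction_db in passes_db:
        gain *= 10 ** (-reduction_db / 20)
    return gain


# Example: a 10 dB target with a 4 dB cap becomes three passes of about 3.3 dB.
passes = plan_passes(10.0, max_per_pass_db=4.0)
for index, reduction_db in enumerate(passes, start=1):
    print(f"pass {index}: reduce noise by {reduction_db:.2f} dB, then listen in the mix")
print(f"combined noise-floor gain: {combined_gain(passes):.3f}")
```

The arithmetic alone does not make layered processing sound better than a single pass. The value of planning it this way is the checkpoint after each pass: if vocal texture starts to suffer, the remaining passes can be skipped and some room tone left in place.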

The Balance Between Clean and Credible

Perfect silence is not the goal of professional audio. Credibility is. Audiences expect voices to feel alive, textured, and emotionally grounded—even in highly produced content.

As AI cleanup tools become more powerful, the responsibility shifts to creators and post-production teams to use them wisely.